EXAONE Deep: LG AI Research’s Next-Gen Reasoning Model

LG AI Research has unveiled EXAONE Deep, a reasoning-focused AI model that performs strongly in mathematical reasoning, scientific problem-solving, and coding.

The AI race for advanced reasoning models is heating up, and LG AI Research has made a bold move with the introduction of EXAONE Deep. Competing with leading AI reasoning models, EXAONE Deep demonstrates exceptional performance across mathematics, science, and coding challenges.

Notably, the 32B model has achieved international recognition, making its way into the prestigious ‘Notable AI Models’ list by Epoch AI. Let’s take a closer look at its capabilities.

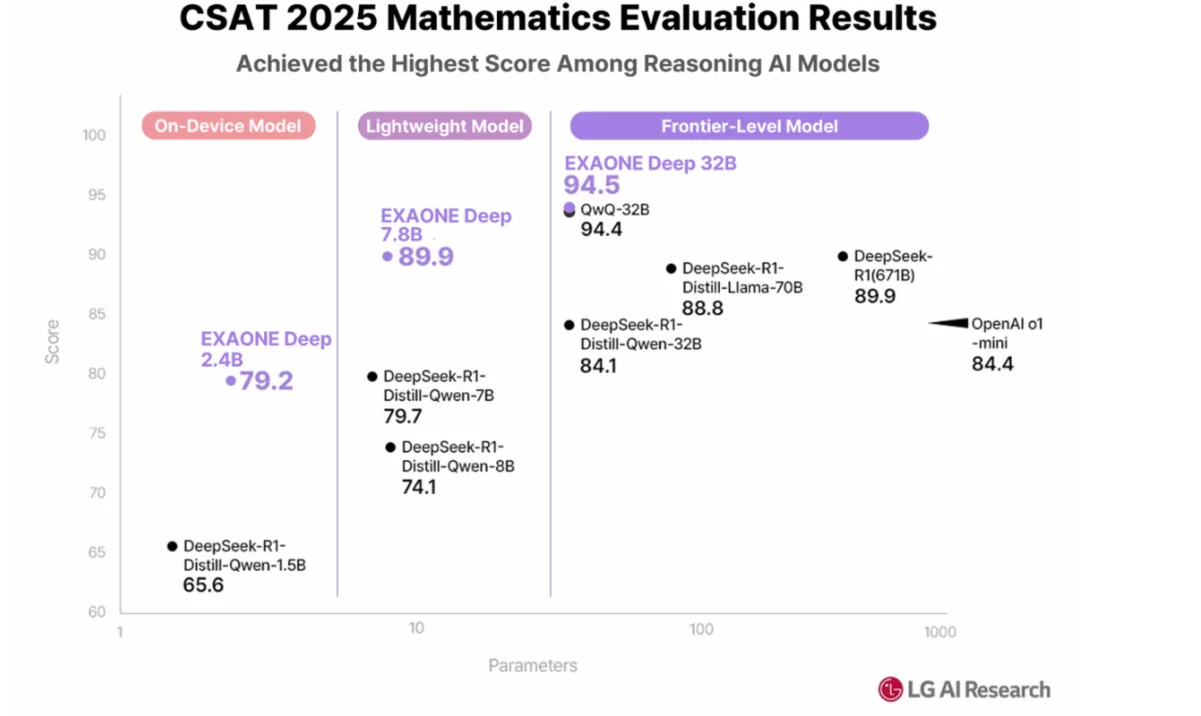

EXAONE Deep’s reasoning capabilities have produced strong results on mathematics benchmarks. Across different model sizes, it has outperformed global competitors.

Remarkably, the 32B model matched the performance of DeepSeek-R1 (671B) in the 2025 AIME exam, showcasing its efficiency in logical problem-solving.
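To put that size gap in perspective, here is a minimal sketch of the arithmetic. It uses only the two parameter counts quoted above (32B and 671B) and compares total parameters; the variable and function names are illustrative, and the comparison says nothing about inference cost or architecture.

```python
# Total parameter counts in billions, as quoted in the text above.
EXAONE_DEEP_32B = 32
DEEPSEEK_R1 = 671


def size_ratio(small_b: float, large_b: float) -> float:
    """Return how many times larger the second model is than the first."""
    return large_b / small_b


# How many times larger DeepSeek-R1 is by total parameter count.
ratio = size_ratio(EXAONE_DEEP_32B, DEEPSEEK_R1)

# The fraction of DeepSeek-R1's parameter count that the 32B model has.
share = EXAONE_DEEP_32B / DEEPSEEK_R1

print(f"DeepSeek-R1 is about {ratio:.0f}x larger by parameter count")
print(f"EXAONE Deep 32B has about {share:.1%} of the parameters")
```

By this rough measure, the 32B model reaches a comparable AIME result with about one twenty-first of the parameters.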

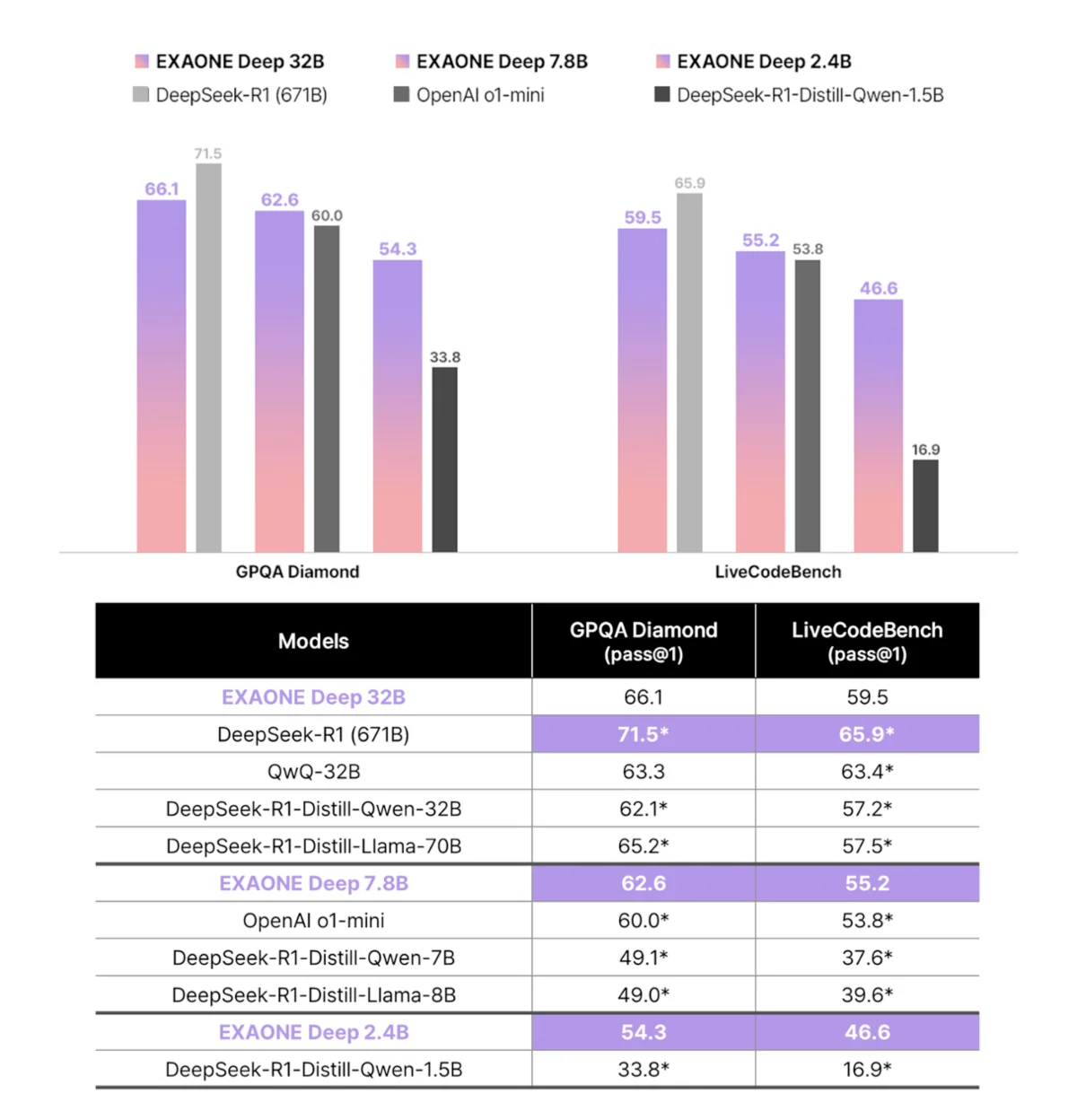

EXAONE Deep has also performed well in professional-level science reasoning and coding tasks.

The lightweight 7.8B and 2.4B models also ranked first in their categories, continuing the strong performance trend.

LG AI Research has released the following performance metrics for EXAONE Deep models:

| Benchmark | 32B Model | 7.8B Model | 2.4B Model |
|---|---|---|---|
| MMLU Score | 83.0 | — | — |
| Math General Competency | 94.5 | 94.8 | 92.3 |
| AIME 2025 | 90.0 | 59.6 | 47.9 |
| GPQA Diamond | 66.1 | — | — |
| LiveCodeBench | 59.5 | — | — |

EXAONE Deep has established itself as a leading AI reasoning model, with strong results in mathematical, scientific, and coding problem-solving. Its performance at smaller sizes makes it a strong competitor against much larger AI models.

As AI research progresses, LG AI Research’s commitment to improving EXAONE Deep’s capabilities suggests a promising future where AI tackles complex problems across various domains.